Vision Transformers Market by Offering (Solutions, Professional Services), Application (Image Segmentation, Object Detection, Image Captioning), Vertical (Media & Entertainment, Retail & eCommerce, Automotive) and Region - Global Forecast to 2028

Updated on : Jan 27, 2026

Vision Transformers Market - Worldwide | Future Scope & Trends

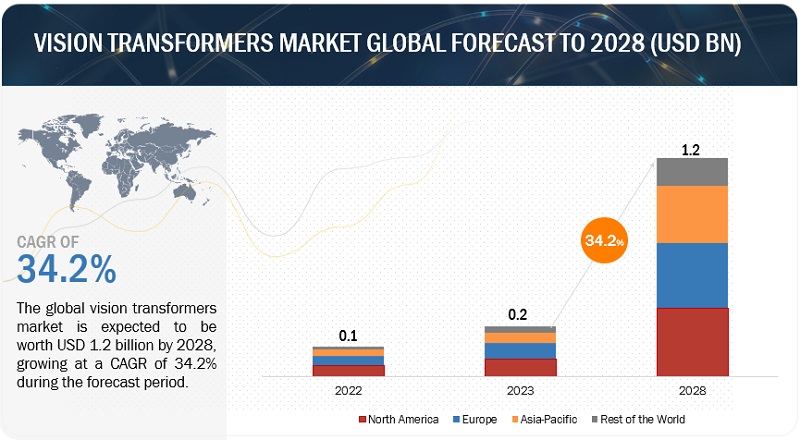

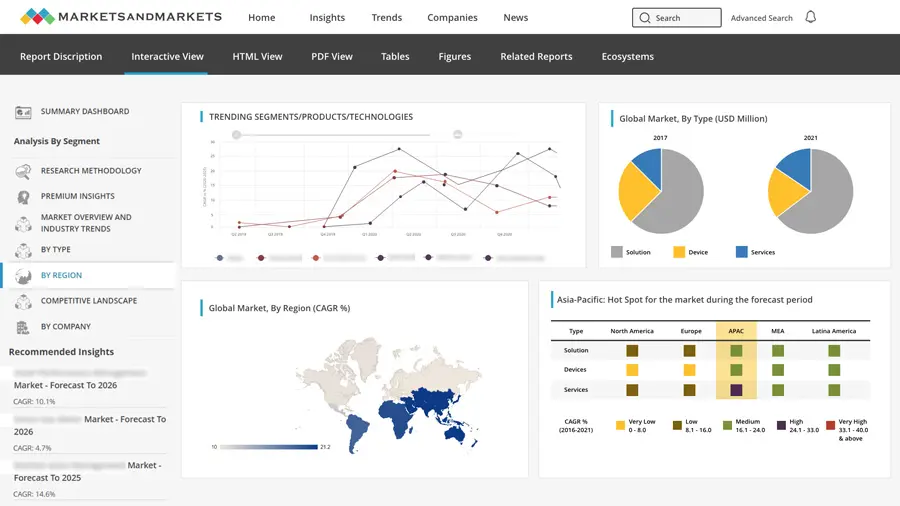

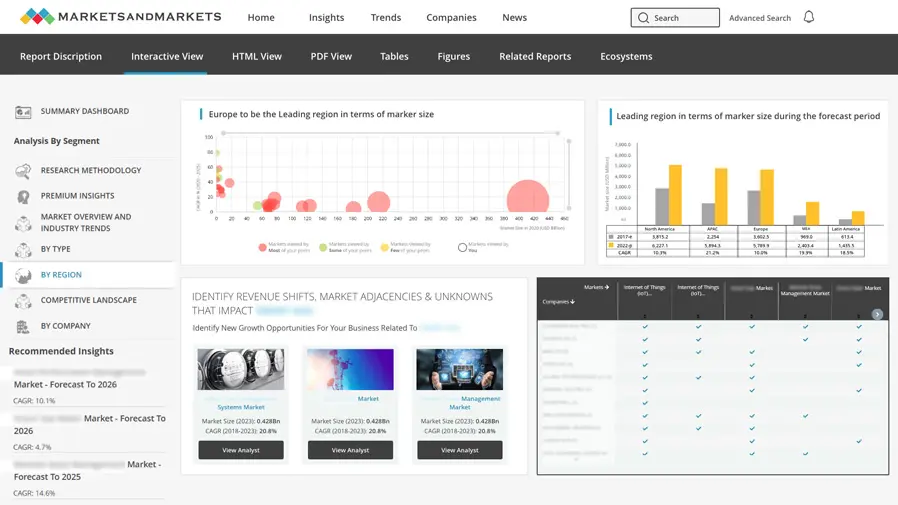

The vision transformers market is projected to grow significantly, increasing from USD 0.2 billion in 2023 to USD 1.2 billion by 2028, with a robust CAGR of 34.2%.
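As a rough check on how a CAGR figure relates to start and end values, the sketch below applies the standard compound-growth formula to the rounded headline numbers. The helper names `cagr` and `implied_start` are illustrative, not taken from the report. Because the published endpoints are rounded to one decimal place, the rate recomputed from them differs from the stated 34.2%, which presumably rests on unrounded estimates.

```python
def cagr(start_value: float, end_value: float, years: int) -> float:
    """Compound annual growth rate between two values over `years` periods."""
    if start_value <= 0 or years <= 0:
        raise ValueError("start_value and years must be positive")
    return (end_value / start_value) ** (1 / years) - 1


def implied_start(end_value: float, rate: float, years: int) -> float:
    """Starting value that reaches `end_value` after `years` of growth at `rate`."""
    return end_value / (1 + rate) ** years


# Rounded headline figures from the report (USD billion); 2023 -> 2028 is 5 periods.
rate_from_rounded = cagr(0.2, 1.2, 5)
start_from_stated_rate = implied_start(1.2, 0.342, 5)

print(f"CAGR from rounded endpoints: {rate_from_rounded:.1%}")
print(f"2023 value implied by a 34.2% CAGR: USD {start_from_stated_rate:.2f} billion")
```

The second figure shows what 2023 base would be consistent with the stated growth rate and the 2028 endpoint. It is an arithmetic illustration only, not a figure from the report.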

Key factors driving the vision transformers market include the growing adoption of attention mechanisms and transfer learning, together with broader technology advancements. The expanding use of computer vision across industries for tasks such as image recognition, object detection, and video analysis also contributes to market growth. Several tech companies and startups are actively developing vision transformer models and applications, which increases innovation and competition and, in turn, expands the market's offerings.


Recession Impact on the Vision Transformers Market

This report includes an analysis of the impact of the global recession on the vision transformers market. In this fast-changing environment, the exact effect of the downturn is unknown to the world. Hence, scenario-based approaches, such as rising interest rates, the weakening of currencies, and mounting public debt, have been considered to assess the economic impact and recovery period at the global level. The impact and recovery period for each country/region will be different.

Rising inflation, increasing interest rates, unemployment, and energy crises will slow economic growth. As a result, end-user industries see their businesses, cash flow, and access to financing deteriorate, leading them to delay or cancel product purchases. Vendors that supply electronic components to OEMs face similar problems, which affect their ability to fulfill orders or meet agreed service and quality levels.

Demand for components such as AI in computer vision in end-use markets depends primarily on CAPEX by operators and organizations for constructing, rebuilding, or upgrading their networking systems. A recession affects CAPEX spending as well as companies’ sales and profitability. There can be no assurance that existing capital spending will continue or that spending will not decrease during the economic downturn, which might hamper the adoption of hardware used with vision transformers, such as CPUs, GPUs, ASICs, FPGAs, storage, and memory devices, in residential and industrial environments.

According to the World Economic Forum, adopting AI will create USD 13 trillion in value by 2030, and the current economic slowdown is likely to accelerate this trend as companies seek to streamline their operations and stay competitive. The World Bank has predicted that the global economic downturn will push enterprises toward employee layoffs and adopting AI-assisted tools; this would usher in a potential increase in investment in vision transformer solutions as businesses seek to leverage synthetically generated data to drive growth and competitiveness. Considering the persistent supply-chain disruption, supply-chain diversification and digitalization are the priorities for most organizations in the coming years.

Vision Transformers Market Dynamics

Driver: Increasing demand for automation

Automation is a driving force across industries, and vision transformer technologies play a pivotal role in automating tasks such as quality control, object detection, and visual inspection. Automation helps businesses save time and resources and improves the accuracy of decision-making, which is why many industries are now implementing vision transformers to automate their processes.

In retail, computer vision systems track inventory levels and sales trends to aid retailers in making informed decisions about which products to stock and how to allocate resources. The medical field also benefits from vision transformers. For instance, computer vision systems can analyze medical images to help diagnose and treat diseases, reducing the time and resources required for manual analysis.

Restraint: High installation cost

The high cost of making image recognition systems could hinder the market’s growth. Most enabling technologies, such as face recognition, deep learning, computer vision, AI, ML, and gesture recognition, have substantial development costs. Thus, companies that lack financial resources do not opt for image recognition offerings even if they are interested in such solutions to increase productivity. Well-known vendor solutions such as Microsoft Computer Vision API, Microsoft Emotion API, Amazon Rekognition, Google Cloud Vision API, and IBM Watson Visual Recognition are highly priced, making it difficult for small companies to deploy them. The massive cost of implementing image recognition solutions and training AI enablers to execute specific tasks would deter small businesses; this could be a restraint for image recognition solution vendors.

Deploying vision transformers often requires specialized hardware, such as high-performance GPUs or TPUs, to efficiently process and infer on large-scale models. These hardware components can be expensive to purchase and install, especially considering the infrastructure needed to support them, such as servers or data center facilities. The initial investment in hardware can be a substantial barrier for organizations, notably smaller businesses or startups with limited budgets. High installation costs may delay or deter their adoption of vision transformers for image recognition tasks. In addition to hardware, installing image recognition solutions often involves infrastructure expenses; this includes the cost of setting up and maintaining data centers, cloud computing resources, or edge computing facilities capable of accommodating the computational demands of vision transformers. Deploying vision transformers and image recognition solutions in real-world environments may involve integration with existing systems, software development, and maintenance costs. These infrastructure expenses can strain an organization’s financial resources.

Opportunity: Integration of AI capabilities

Integrating AI techniques with vision transformers enables more advanced and accurate image analysis, enhancing decision-making in applications such as autonomous vehicles and industrial automation. Major image recognition market players like Microsoft and their partners enable companies across different verticals to optimize business operations by transitioning from manual to AI-based operations. For example, Clobotics, a Chinese intelligent computer vision provider for retailers, has developed the Cloud Image Recognition solution, which uses AI, advanced computer vision, and machine learning technologies to provide real-time insights on product placement, shelf optimization, product tracking, and planogram compliance through Microsoft Power BI. Microsoft’s Australia-based partner Lakeba has combined its computer vision technology with intelligent image capturing and Microsoft Azure, a cloud-based data analytics solution, to provide optimal on-shelf stock management. AI-powered image recognition solutions interpret very large volumes of data and provide actionable insights that are impractical to obtain through manual operations. Hence, the proliferation of AI and machine learning (ML) technology combined with image recognition capabilities will create opportunities for vision transformers.

Challenge: Limited understanding and technical expertise in vision transformers

Limited understanding and technical expertise in vision transformers are critical challenges for the market. AI and computer vision are complex technologies that require specialized knowledge and skills to implement effectively, which makes it difficult for organizations to understand the capabilities and limitations of these systems and apply them to real-world problems. Vision transformer systems also demand advanced programming skills and a deep understanding of mathematical algorithms and machine learning concepts. This makes it challenging for organizations to find and hire the technical expertise needed to develop and deploy these systems. Another challenge is the limited understanding of the ethical and societal implications of vision transformer systems. As these systems become more prevalent, organizations need to understand their potential impact on privacy, security, and other important societal values.

Many initiatives are underway to address these limitations, such as educational programs and online courses that build understanding and technical expertise in vision transformers. As the field grows, more organizations will likely invest in developing the expertise needed to implement these systems effectively, and the technology will likely become more widely adopted across applications and industries.

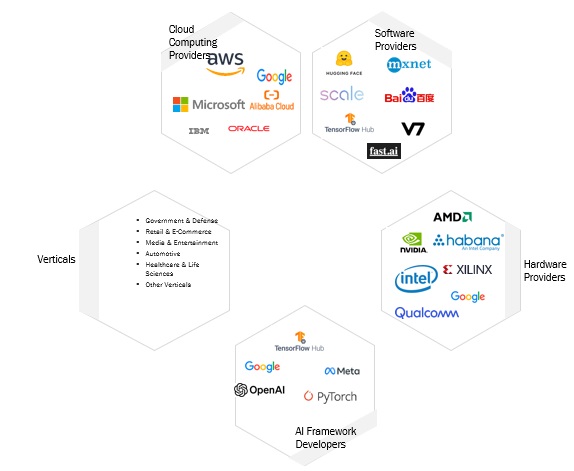

Vision Transformers Market Ecosystem

This section highlights the vision transformers ecosystem, which comprises software providers, hardware providers, AI framework developers, cloud computing providers, and verticals. Hardware providers such as NVIDIA, Intel, and AMD supply GPUs and other accelerators optimized for deep learning workloads, which are critical for efficient vision transformer model training and inference. Software providers offer tools, libraries, and pre-trained models that facilitate developing, training, and deploying vision transformer models; examples include Hugging Face Transformers and TensorFlow Hub. Cloud providers like AWS, Microsoft Azure, and Google Cloud offer cloud-based infrastructure, AI services, and GPU/TPU instances for vision transformer model development and deployment. Organizations like Facebook AI Research, Google AI, and OpenAI develop deep learning frameworks (e.g., PyTorch, TensorFlow) and libraries that support vision transformer model development. The verticals adopt vision transformer solutions to enhance their operations, automate tasks, and gain insights from visual data.

By professional service, the deployment & integration segment will record the second-highest CAGR during the forecast period.

Deployment and integration services in the Vision Transformers market are specialized offerings aimed at helping organizations integrate vision transformer models into their existing systems, applications, and workflows, ensuring that they operate seamlessly and deliver value in real-world scenarios. These services involve the technical aspects of setting up and configuring vision transformer solutions for production use. Deployment services focus on taking vision transformer models from the development and training stage to operational use; this includes setting up the necessary infrastructure and environments to host the models. Service providers help organizations set up the hardware and software infrastructure for deploying Vision Transformers models.

Based on application, the image segmentation segment holds the largest market share in 2023.

Image segmentation divides images into meaningful and distinct segments or regions. Each segment typically corresponds to a particular object, area, or category within the image. Vision transformers, which have gained popularity for their ability to capture complex relationships in visual data, are increasingly used for image segmentation tasks. Image segmentation is crucial in computer vision for various applications, including object detection, medical imaging, and autonomous vehicles. The goal is to assign each pixel in an image to a specific class or label, effectively partitioning the image into regions of interest. Vision transformers are neural network architectures initially designed for image classification and later adapted for image segmentation tasks.
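The per-pixel labeling step described above can be sketched in a few lines. This is a minimal illustration, assuming a model has already produced one score per class for every pixel. The class names and the score values are made up for the example and do not come from the report or from any particular model.

```python
from collections import Counter

# Hypothetical class names, for illustration only.
CLASSES = ["background", "road", "vehicle"]


def segment(scores):
    """Turn per-pixel class scores into a label map.

    `scores[row][col]` is a list with one score per class; the label
    for that pixel is the index of the highest score (argmax).
    """
    return [
        [max(range(len(pixel)), key=pixel.__getitem__) for pixel in row]
        for row in scores
    ]


def region_sizes(label_map):
    """Count how many pixels were assigned to each class."""
    counts = Counter(label for row in label_map for label in row)
    return {CLASSES[label]: n for label, n in counts.items()}


# A made-up 2x3 "image" of class scores standing in for model output.
scores = [
    [[0.7, 0.2, 0.1], [0.1, 0.8, 0.1], [0.1, 0.6, 0.3]],
    [[0.2, 0.7, 0.1], [0.1, 0.3, 0.6], [0.2, 0.2, 0.6]],
]
label_map = segment(scores)
print(label_map)
print(region_sizes(label_map))
```

In a real system the scores would come from a segmentation head on top of a vision transformer backbone; only the final argmax step is shown here.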

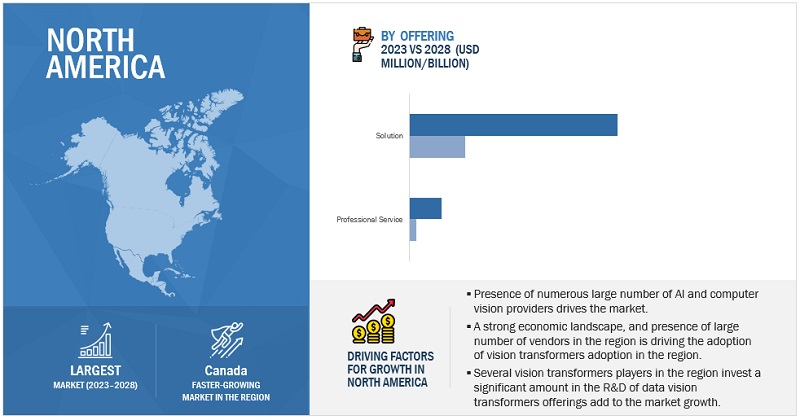

The US market contributes the largest share of North America’s vision transformers market during the forecast period.

The US is estimated to account for the largest share of North America’s vision transformers market in 2023, and this trend is expected to continue through 2028. It is the most developed market for adopting vision transformers owing to its advanced IT infrastructure, large number of businesses, and availability of technical skills. The United States has prominent technology hubs, including Silicon Valley and the wider San Francisco Bay Area, Seattle, and Boston. These regions host a concentration of tech companies, startups, and research institutions focused on artificial intelligence (AI) and computer vision, making them hotbeds for vision transformers development and adoption. Leading US tech giants like Google, Facebook (now Meta), Microsoft, and Amazon actively engage in vision transformers research and development.

Key Market Players

The key technology vendors in the market include Google (US), OpenAI (US), Meta (US), AWS (US), NVIDIA Corporation (US), LeewayHertz (US), Synopsys (US), Hugging Face (US), Microsoft (US), Qualcomm (US), Intel (US), Clarifai (US), Quadric (US), Viso.ai (Switzerland), Deci (Israel), and V7 Labs (UK). Most key players have adopted partnerships and product developments to cater to the demand for vision transformers.

Scope of the Report

| Report Metrics | Details |
| --- | --- |
| Market size available for years | 2022–2028 |
| Base year considered | 2022 |
| Forecast period | 2023–2028 |
| Forecast units | Million/Billion (USD) |
| Segments Covered | Offering, Application, Verticals |
| Geographies Covered | North America, Europe, Asia Pacific, and Rest of the World |
| Companies Covered | The key technology vendors in the market include Google (US), OpenAI (US), Meta (US), AWS (US), NVIDIA Corporation (US), LeewayHertz (US), and more. |

Vision Transformers Market Highlights

This research report categorizes the Vision Transformers Market to forecast revenues and analyze trends in each of the following submarkets:

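| Segment | Subsegment |
| --- | --- |
| Based on the Offering: | Solutions (Software, Hardware); Professional Services (Consulting; Deployment & Integration; Training, Support, and Maintenance) |
| Based on the Application: | Image Classification; Image Captioning; Image Segmentation; Object Detection; Other Applications |
| Based on the Vertical: | Retail & eCommerce; Media & Entertainment; Automotive; Government & Defense; Healthcare & Life Sciences; Other Verticals |
| Based on Regions: | North America (US, Canada); Europe (UK, Germany, France, Italy, Rest of Europe); Asia Pacific (China, Japan, India, Rest of Asia Pacific); Rest of the World |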

Recent Developments:

- In October 2023, Amazon SageMaker Model Registry added support for registering machine learning (ML) models stored in private Docker repositories. This feature makes it possible to track ML models from multiple private repositories, whether AWS or non-AWS, within a single centralized service, which streamlines the management of ML operations (MLOps) and improves ML governance, especially in large-scale ML environments.

- In September 2023, with the introduction of OpenVINO version 2023.1, Intel extended the capabilities of Generative AI to everyday desktops and laptops, enabling the execution of these models in local, resource-limited settings; this empowers developers to experiment with and seamlessly integrate Generative AI into their applications.

- In August 2023, the M110 release of Vertex AI Workbench user-managed notebooks added the following enhancements:
  - Support for TensorFlow 2.13 with Python 3.10 on Debian 11.
  - Support for TensorFlow 2.8 with Python 3.10 on Debian 11.
  - Various software updates for improved performance and functionality.

- In July 2023, Edge Impulse, a platform for creating, optimizing, and deploying machine learning models and algorithms on edge devices, revealed the integration of the latest NVIDIA TAO Toolkit 5.0 into its edge AI platform.

- In July 2023, NVIDIA introduced the TAO Toolkit 5.0, which brings several groundbreaking features to enhance AI model development. Key highlights of this release include the ability to export models in an open ONNX format, enabling deployment on various platforms, advanced training for vision transformers, AI-assisted data annotation for faster labeling of segmentation masks, support for new computer vision tasks and pre-trained models, and the open-source availability of customizable solutions. These enhancements empower developers to create more accurate and robust AI models while simplifying the development and integration processes. It enables users to improve the accuracy and robustness of Vision AI Apps with vision transformers and NVIDIA TAO.

- In June 2023, Hugging Face collaborated with AMD by including AMD in their Hardware Partner Program. AMD and Hugging Face collaborate to achieve top-tier transformer model performance on AMD’s CPUs and GPUs. This partnership holds significant promise for the broader Hugging Face community, as it will soon grant access to the latest AMD platforms for training and inference purposes.

- In March 2023, OpenAI released GPT-4, the latest model behind its hugely popular AI chatbot ChatGPT. The new model can respond to images, for instance by suggesting recipes from photos of ingredients, and can write captions and descriptions. It can also process up to 25,000 words, about eight times as many as ChatGPT. OpenAI spent six months on the safety features of GPT-4 and trained it on human feedback. GPT-4 will initially be available to ChatGPT Plus subscribers, who pay USD 20 per month for premium access to the service. It is already powering Microsoft’s Bing search engine platform.

- In March 2023, Microsoft made ChatGPT available in preview in Azure OpenAI Service. More than 1,000 customers were already using the service’s AI models, including Dall-E 2, GPT-3.5, Codex, and other large language models backed by Azure’s supercomputing and enterprise capabilities. With ChatGPT in preview, developers can integrate custom AI-powered experiences directly into their applications, including enhancing existing bots to handle unexpected questions, recapping call center conversations to enable faster customer support resolutions, creating new ad copy with personalized offers, and automating claims processing.

Frequently Asked Questions (FAQ):

What are vision transformers?

Vision Transformers, often abbreviated as ViTs, are deep learning models used for computer vision tasks, such as image classification and object detection. They are an extension of the Transformer architecture, initially developed for natural language processing tasks, but have proven highly effective in various domains. Vision Transformers have gained popularity due to their ability to achieve state-of-the-art performance on various computer vision tasks and their capacity to handle large-scale datasets.
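A ViT reuses the Transformer architecture by treating an image as a sequence: the image is cut into fixed-size patches, and each flattened patch plays the role that a word token plays in language models. The sketch below shows only that patch-splitting step on a toy image. The function name `patchify` and the tiny 4x4 input are illustrative assumptions, and the learned embedding and attention layers that follow in a real model are omitted.

```python
def patchify(image, patch_size):
    """Split a 2-D image (list of rows) into flattened, non-overlapping patches.

    Patches are returned in row-major order, which is the sequence
    order a ViT-style model would feed to its transformer encoder.
    """
    height, width = len(image), len(image[0])
    if height % patch_size or width % patch_size:
        raise ValueError("image size must be a multiple of patch_size")
    patches = []
    for top in range(0, height, patch_size):
        for left in range(0, width, patch_size):
            patch = [
                image[r][c]
                for r in range(top, top + patch_size)
                for c in range(left, left + patch_size)
            ]
            patches.append(patch)
    return patches


# Toy 4x4 single-channel image with pixel values 0..15.
image = [[row * 4 + col for col in range(4)] for row in range(4)]
tokens = patchify(image, patch_size=2)
print(len(tokens))  # number of patches in the sequence
print(tokens[0])    # first patch, flattened
```

With a commonly used configuration of 224x224 inputs and 16x16 patches, the same arithmetic yields 14 x 14 = 196 patches per image.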

Which country is an early adopter of vision transformers?

The US is among the early adopters of vision transformers.

What are the driving factors in the vision transformers market?

Factors include the increasing need for image recognition in the automotive industry, transfer learning playing a crucial role in adopting ViTs, technology advancements boosting the demand for image recognition among CPG and retail companies, the growing impact of AI in machine vision, and attention mechanisms driving the market growth.

Which significant verticals are adopting vision transformers?

Key verticals adopting vision transformers include:

- Retail & eCommerce

- Media & Entertainment

- Automotive

- Government & Defense

- Healthcare & Life Sciences

- Other Verticals

Which are the key vendors exploring the vision transformers market?

The key technology vendors in the market include Google (US), OpenAI (US), Meta (US), AWS (US), NVIDIA Corporation (US), LeewayHertz (US), Synopsys (US), Hugging Face (US), Microsoft (US), Qualcomm (US), Intel (US), Clarifai (US), Quadric (US), Viso.ai (Switzerland), Deci (Israel), and V7 Labs (UK). Most key players have adopted partnerships and product developments to cater to the demand for vision transformers.

What is the total CAGR for the vision transformers market during the forecast years (2023-2028)?

The vision transformers market is projected to record a CAGR of 34.2% during 2023-2028.


- 5.1 INTRODUCTION

- 5.2 MARKET DYNAMICS
  - DRIVERS
    - Increasing demand for automation
    - Increasing need for vision transformers in automotive industry
    - Versatility and efficiency of transfer learning
    - Rapid technology advancements
    - Growing impact of AI in machine vision
    - Rapid adoption of attention mechanism
    - Continuous advancements in vision transformer architectures
  - RESTRAINTS
    - Concerns related to high computational intensity and resource requirements
    - High installation cost
    - Data annotation and privacy concerns
  - OPPORTUNITIES
    - Increasing demand for big data analytics
    - Integration of AI capabilities with image recognition solutions
    - Development of machine learning pertaining to vision technology
    - Advancements in hardware
  - CHALLENGES
    - Limited understanding and technical expertise
    - Challenges related to large-scale data requirements
    - Stringent data privacy regulations

- 5.3 CASE STUDY ANALYSIS
  - PUBLIC SECTOR COMPANY DEPLOYED DECI’S AUTO NAC ENGINE TO ACCELERATE PRODUCT DEVELOPMENT
  - LEEWAYHERTZ TEAMED UP WITH WINE COMPANY TO HELP CUSTOMERS MAKE INFORMED CHOICES ABOUT BUYING WINE
  - HYUNDAI DEPLOYED AMAZON SAGEMAKER’S SOLUTIONS TO OPTIMIZE TRAINING AND DEVELOPMENT
  - GENMAB ADOPTED V7’S SOLUTIONS TO STREAMLINE DATA ANNOTATION PROCESSES
  - CELGENE ADOPTED AMAZON SAGEMAKER AND NVIDIA GPUS TO ACCELERATE ALGORITHM DEVELOPMENT, ALLOWING RESEARCHERS TO FOCUS ON CORE TASKS

- 5.4 SUPPLY CHAIN ANALYSIS

- 5.5 ECOSYSTEM ANALYSIS

- 5.6 TECHNOLOGY ANALYSIS
  - KEY TECHNOLOGIES
    - Natural Language Processing (NLP)
    - Computer Vision
  - COMPLEMENTARY TECHNOLOGIES
    - Cloud
    - IoT
    - Big Data
  - ADJACENT TECHNOLOGIES
    - Deep Learning Models
    - Machine Learning
    - Generative AI

- 5.7 PRICING ANALYSIS
  - AVERAGE SELLING PRICE TRENDS OF KEY PLAYERS, BY SOLUTION
  - INDICATIVE PRICING ANALYSIS OF VISION TRANSFORMER VENDORS, BY SOLUTION

- 5.8 PATENT ANALYSIS

- 5.9 PORTER’S FIVE FORCES ANALYSIS
  - THREAT OF NEW ENTRANTS
  - THREAT OF SUBSTITUTES
  - BARGAINING POWER OF SUPPLIERS
  - BARGAINING POWER OF BUYERS
  - INTENSITY OF COMPETITIVE RIVALRY

- 5.10 TARIFF AND REGULATORY LANDSCAPE
  - TARIFF ANALYSIS
  - REGULATIONS, BY REGION
  - REGULATORY BODIES, GOVERNMENT AGENCIES, AND OTHER ORGANIZATIONS
    - European Union (EU) – Artificial Intelligence Act (AIA)
    - Interim Administrative Measures for Generative Artificial Intelligence Services
    - General Data Protection Regulation
    - National Artificial Intelligence Initiative Act (NAIIA)
    - Information Security Technology – Personal Information Security Specification GB/T 35273-2017
    - Artificial Intelligence and Data Act (AIDA)
    - General Data Protection Law
    - Law No. 13 of 2016 on Protecting Personal Data
    - NIST Special Publication 800-144 – Guidelines on Security and Privacy in Public Cloud Computing

- 5.11 TRADE ANALYSIS

- 5.12 TRENDS/DISRUPTIONS IMPACTING BUYERS

- 5.13 KEY STAKEHOLDERS & BUYING CRITERIA
  - KEY STAKEHOLDERS IN BUYING PROCESS
  - BUYING CRITERIA

- 5.14 VISION TRANSFORMERS MARKET: BUSINESS MODEL ANALYSIS
  - SUBSCRIPTION BUSINESS MODEL
  - SERVICE BUSINESS MODEL

- 5.15 KEY CONFERENCES & EVENTS

- 6.1 INTRODUCTION
  - OFFERINGS: VISION TRANSFORMERS MARKET DRIVERS

- 6.2 SOLUTIONS
  - SOFTWARE
    - Need for efficient vision data handling to drive market for vision transformer software solutions
    - Standalone vision transformer software packages
    - Vision transformer libraries and frameworks
  - HARDWARE
    - Hardware supports development, training, and deployment of computer vision models

- 6.3 PROFESSIONAL SERVICES
  - CONSULTING
    - Consulting services help organizations manage development, deployment, and maintenance of vision transformers
  - DEPLOYMENT & INTEGRATION
    - Deployment and integration services enable organizations to make most of their investments in vision transformer solutions
  - TRAINING, SUPPORT, AND MAINTENANCE
    - High demand for training and support services from companies adopting vision transformers to drive market

- 7.1 INTRODUCTION
  - APPLICATIONS: VISION TRANSFORMERS MARKET DRIVERS

- 7.2 IMAGE CLASSIFICATION
  - INCREASING ADOPTION OF IMAGE CLASSIFICATION ACROSS VARIOUS INDUSTRIES AND SECTORS TO BOOST GROWTH
  - SINGLE-LABEL IMAGE CLASSIFICATION
  - FINE-GRAINED IMAGE CLASSIFICATION
  - MULTI-LABEL CLASSIFICATION
  - OTHERS

- 7.3 IMAGE CAPTIONING
  - IMAGE CAPTIONING HAS POTENTIAL TO REVOLUTIONIZE UTILIZATION OF VISUAL CONTENT ACROSS INDUSTRIES
  - FEATURE EXTRACTION
  - MULTI-MODAL FUSION
  - OTHERS

- 7.4 IMAGE SEGMENTATION
  - USE OF IMAGE SEGMENTATION IN WIDE RANGE OF APPLICATIONS, SUCH AS OBJECT DETECTION, MEDICAL IMAGING, AND AUTONOMOUS VEHICLES, TO DRIVE MARKET
  - SEMANTIC SEGMENTATION
  - INSTANCE SEGMENTATION
  - OTHERS

- 7.5 OBJECT DETECTION
  - PRECISION AND EFFICIENCY OF VISION TRANSFORMER ARCHITECTURES TO DRIVE THEIR USE FOR OBJECT DETECTION
  - SINGLE SHOT DETECTORS (SSDS)
  - REGION-BASED CONVOLUTIONAL NEURAL NETWORKS (R-CNN)
  - OTHERS

- 7.6 OTHER APPLICATIONS

- 8.1 INTRODUCTION
  - VERTICALS: VISION TRANSFORMERS MARKET DRIVERS

- 8.2 RETAIL & ECOMMERCE
  - NEED FOR RETAILERS TO USE VISION TRANSFORMERS TO COLLECT AND ANALYZE DATA ON CUSTOMER BEHAVIOR TO BOOST MARKET
  - VISUAL SEARCH & RECOMMENDATION
  - VIRTUAL TRY-ON & FITTING ROOMS
  - AUTOMATED PRODUCT TAGGING & CATALOGING
  - OTHERS

- 8.3 MEDIA & ENTERTAINMENT
  - DEMAND FOR OPTIMIZED CONTENT CREATION, MANAGEMENT, AND DELIVERY TO BOOST MARKET EXPANSION
  - CONTENT RECOMMENDATION
  - CONTENT MODERATION
  - VIDEO SUMMARIZATION & HIGHLIGHT EXTRACTION
  - OTHERS

- 8.4 AUTOMOTIVE
  - INCREASING NEED TO IMPROVE ROAD SAFETY AND REDUCE MISHAPS TO DRIVE USE OF COMPUTER VISION SOLUTIONS
  - AUTONOMOUS DRIVING & ADVANCED DRIVER ASSISTANCE SYSTEMS (ADAS)
  - PEDESTRIAN & CYCLIST DETECTION
  - OTHERS

- 8.5 GOVERNMENT & DEFENSE
  - RISING NEED TO IMPROVE SITUATIONAL AWARENESS, SECURITY, AND OPERATIONAL EFFICIENCY TO DRIVE ADOPTION OF VISION TRANSFORMERS IN GOVERNMENT AND DEFENSE SECTORS
  - BORDER SECURITY & SURVEILLANCE
  - AERIAL & SATELLITE IMAGE ANALYSIS
  - OBJECT DETECTION & TRACKING
  - OTHERS

- 8.6 HEALTHCARE & LIFE SCIENCES
  - VISION TRANSFORMERS ENHANCE MEDICAL ROBOTICS APPLICATIONS BY PROVIDING REAL-TIME VISUAL FEEDBACK AND GUIDANCE DURING PROCEDURES
  - MEDICAL IMAGE ANALYSIS
  - DISEASE DETECTION & CLASSIFICATION
  - DRUG DISCOVERY & DRUG DEVELOPMENT
  - OTHERS

- 8.7 OTHER VERTICALS

- 9.1 INTRODUCTION

- 9.2 NORTH AMERICA
  - NORTH AMERICA: VISION TRANSFORMERS MARKET DRIVERS
  - NORTH AMERICA: RECESSION IMPACT
  - US
    - Growth in technology hubs across country, with participation from companies and research institutions, to drive market
  - CANADA
    - Growing innovation in healthcare applications and IT infrastructure to boost growth

- 9.3 EUROPE
  - EUROPE: VISION TRANSFORMERS MARKET DRIVERS
  - EUROPE: RECESSION IMPACT
  - UK
    - Rise in investments by government in AI and computer vision technologies to spur market expansion
  - GERMANY
    - German government to become technology’s global leader by galvanizing AI R&D and application
  - FRANCE
    - Government initiatives to support adoption of vision and AI technologies to drive market growth
  - ITALY
    - Increasing government support and initiatives related to AI and technology innovation to encourage growth
  - REST OF EUROPE

- 9.4 ASIA PACIFIC
  - ASIA PACIFIC: VISION TRANSFORMERS MARKET DRIVERS
  - ASIA PACIFIC: RECESSION IMPACT
  - CHINA
    - Rapid digitalization, economic growth, and increase in data consumption to propel market expansion
  - JAPAN
    - Engagement of prominent tech companies in AI development to boost market
  - INDIA
    - Growth in initiatives to implement Industry 4.0 to encourage market expansion
  - REST OF ASIA PACIFIC

- 9.5 REST OF THE WORLD (ROW)
  - EXTENSIVE USE OF VISION TRANSFORMERS IN AGRICULTURE AND TO ADDRESS SECURITY CHALLENGES TO SPUR GROWTH
  - ROW: VISION TRANSFORMERS MARKET DRIVERS
  - ROW: RECESSION IMPACT

- 10.1 INTRODUCTION

- 10.2 KEY PLAYER STRATEGIES/RIGHT TO WIN

- 10.3 REVENUE ANALYSIS

- 10.4 MARKET SHARE ANALYSIS

- 10.5 BRAND COMPARISON/VENDOR PRODUCT LANDSCAPE

- 10.6 GLOBAL SNAPSHOT OF KEY MARKET PARTICIPANTS

- 10.7 COMPANY EVALUATION MATRIX
  - STARS
  - EMERGING LEADERS
  - PERVASIVE PLAYERS
  - PARTICIPANTS
  - COMPANY FOOTPRINT

- 10.8 VALUATION AND FINANCIAL METRICS OF VISION TRANSFORMER VENDORS

- 10.9 KEY MARKET DEVELOPMENTS
  - PRODUCT LAUNCHES & ENHANCEMENTS
  - DEALS

- 11.1 INTRODUCTION

- 11.2 MAJOR PLAYERS
  - GOOGLE
    - Business overview
    - Products/Solutions/Services offered
    - Recent developments
    - MnM view
  - OPENAI
    - Business overview
    - Products/Solutions/Services offered
    - Recent developments
    - MnM view
  - META
    - Business overview
    - Products/Solutions/Services offered
    - Recent developments
    - MnM view
  - AWS
    - Business overview
    - Products/Solutions/Services offered
    - Recent developments
    - MnM view
  - NVIDIA CORPORATION
    - Business overview
    - Products/Solutions/Services offered
    - Recent developments
    - MnM view
  - LEEWAYHERTZ
    - Business overview
    - Products/Services offered
  - SYNOPSYS
    - Business overview
    - Products/Solutions/Services offered
    - Recent developments
  - HUGGING FACE
    - Business overview
    - Products/Solutions/Services offered
    - Recent developments
  - MICROSOFT
    - Business overview
    - Products/Solutions/Services offered
    - Recent developments
  - QUALCOMM
    - Business overview
    - Products/Solutions/Services offered
    - Recent developments
  - INTEL
    - Business overview
    - Products/Solutions/Services offered
    - Recent developments

- 11.3 OTHER PLAYERS
  - CLARIFAI
  - QUADRIC
  - VISO.AI
  - DECI
  - V7 LABS

- 12.1 INTRODUCTION
  - RELATED MARKETS
  - LIMITATIONS

- 12.2 GENERATIVE AI MARKET

- 12.3 AI IN COMPUTER VISION MARKET

- 13.1 DISCUSSION GUIDE

- 13.2 KNOWLEDGESTORE: MARKETSANDMARKETS’ SUBSCRIPTION PORTAL

- 13.3 CUSTOMIZATION OPTIONS

- 13.4 RELATED REPORTS

- 13.5 AUTHOR DETAILS

- TABLE 1 USD EXCHANGE RATES, 2018–2022

- TABLE 2 FACTOR ANALYSIS

- TABLE 3 VISION TRANSFORMERS MARKET SIZE AND GROWTH, 2022–2028 (USD MILLION, Y-O-Y GROWTH)

- TABLE 4 INDICATIVE PRICING ANALYSIS OF GOOGLE, BY SOLUTION

- TABLE 5 INDICATIVE PRICING ANALYSIS OF HUGGING FACE, BY SOLUTION

- TABLE 6 TOP 10 PATENT APPLICANTS (US)

- TABLE 7 IMPACT OF PORTER’S FIVE FORCES ON VISION TRANSFORMERS MARKET

- TABLE 8 MFN TARIFFS FOR HS CODE: 8471 EXPORTED BY US, 2022

- TABLE 9 MFN TARIFFS FOR HS CODE: 8471 EXPORTED BY AUSTRALIA, 2022

- TABLE 10 MFN TARIFFS FOR HS CODE: 8471 EXPORTED BY CANADA, 2022

- TABLE 11 NORTH AMERICA: REGULATORY BODIES, GOVERNMENT AGENCIES, AND OTHER ORGANIZATIONS

- TABLE 12 EUROPE: REGULATORY BODIES, GOVERNMENT AGENCIES, AND OTHER ORGANIZATIONS

- TABLE 13 ASIA PACIFIC: REGULATORY BODIES, GOVERNMENT AGENCIES, AND OTHER ORGANIZATIONS

- TABLE 14 MIDDLE EAST & AFRICA: REGULATORY BODIES, GOVERNMENT AGENCIES, AND OTHER ORGANIZATIONS

- TABLE 15 LATIN AMERICA: REGULATORY BODIES, GOVERNMENT AGENCIES, AND OTHER ORGANIZATIONS

- TABLE 16 IMPORT DATA, BY COUNTRY, 2018–2022 (USD MILLION)

- TABLE 17 EXPORT DATA, BY COUNTRY, 2018–2022 (USD MILLION)

- TABLE 18 INFLUENCE OF STAKEHOLDERS ON BUYING PROCESS FOR KEY VERTICALS

- TABLE 19 KEY BUYING CRITERIA FOR TOP VERTICALS

- TABLE 20 KEY CONFERENCES & EVENTS, 2023–2024

- TABLE 21 VISION TRANSFORMERS MARKET, BY OFFERING, 2022–2028 (USD MILLION)

- TABLE 22 MARKET, BY SOLUTION, 2022–2028 (USD MILLION)

- TABLE 23 SOLUTIONS: MARKET, BY REGION, 2022–2028 (USD MILLION)

- TABLE 24 SOFTWARE: MARKET, BY REGION, 2022–2028 (USD MILLION)

- TABLE 25 HARDWARE: MARKET, BY REGION, 2022–2028 (USD MILLION)

- TABLE 26 MARKET, BY PROFESSIONAL SERVICE, 2022–2028 (USD MILLION)

- TABLE 27 PROFESSIONAL SERVICES: VISION TRANSFORMERS MARKET, BY REGION, 2022–2028 (USD MILLION)

- TABLE 28 CONSULTING: MARKET, BY REGION, 2022–2028 (USD MILLION)

- TABLE 29 DEPLOYMENT & INTEGRATION: MARKET, BY REGION, 2022–2028 (USD MILLION)

- TABLE 30 TRAINING, SUPPORT, AND MAINTENANCE: MARKET, BY REGION, 2022–2028 (USD MILLION)

- TABLE 31 MARKET, BY APPLICATION, 2022–2028 (USD MILLION)

- TABLE 32 IMAGE CLASSIFICATION: MARKET, BY REGION, 2022–2028 (USD MILLION)

- TABLE 33 IMAGE CAPTIONING: MARKET, BY REGION, 2022–2028 (USD MILLION)

- TABLE 34 IMAGE SEGMENTATION: MARKET, BY REGION, 2022–2028 (USD MILLION)

- TABLE 35 OBJECT DETECTION: VISION TRANSFORMERS MARKET, BY REGION, 2022–2028 (USD MILLION)

- TABLE 36 OTHER APPLICATIONS: MARKET, BY REGION, 2022–2028 (USD MILLION)

- TABLE 37 MARKET, BY VERTICAL, 2022–2028 (USD MILLION)

- TABLE 38 RETAIL & ECOMMERCE: MARKET, BY REGION, 2022–2028 (USD MILLION)

- TABLE 39 MEDIA & ENTERTAINMENT: MARKET, BY REGION, 2022–2028 (USD MILLION)

- TABLE 40 AUTOMOTIVE: MARKET, BY REGION, 2022–2028 (USD MILLION)

- TABLE 41 GOVERNMENT & DEFENSE: MARKET, BY REGION, 2022–2028 (USD MILLION)

- TABLE 42 HEALTHCARE & LIFE SCIENCES: MARKET, BY REGION, 2022–2028 (USD MILLION)

- TABLE 43 OTHER VERTICALS: MARKET, BY REGION, 2022–2028 (USD MILLION)

- TABLE 44 MARKET, BY REGION, 2022–2028 (USD MILLION)

- TABLE 45 NORTH AMERICA: VISION TRANSFORMERS MARKET, BY OFFERING, 2022–2028 (USD MILLION)

- TABLE 46 NORTH AMERICA: MARKET, BY SOLUTION, 2022–2028 (USD MILLION)

- TABLE 47 NORTH AMERICA: MARKET, BY PROFESSIONAL SERVICE, 2022–2028 (USD MILLION)

- TABLE 48 NORTH AMERICA: MARKET, BY APPLICATION, 2022–2028 (USD MILLION)

- TABLE 49 NORTH AMERICA: MARKET, BY VERTICAL, 2022–2028 (USD MILLION)

- TABLE 50 NORTH AMERICA: MARKET, BY COUNTRY, 2022–2028 (USD MILLION)

- TABLE 51 US: MARKET, BY OFFERING, 2022–2028 (USD MILLION)

- TABLE 52 US: MARKET, BY APPLICATION, 2022–2028 (USD MILLION)

- TABLE 53 CANADA: VISION TRANSFORMERS MARKET, BY OFFERING, 2022–2028 (USD MILLION)

- TABLE 54 CANADA: MARKET, BY APPLICATION, 2022–2028 (USD MILLION)

- TABLE 55 EUROPE: MARKET, BY OFFERING, 2022–2028 (USD MILLION)

- TABLE 56 EUROPE: MARKET, BY SOLUTION, 2022–2028 (USD MILLION)

- TABLE 57 EUROPE: MARKET, BY PROFESSIONAL SERVICE, 2022–2028 (USD MILLION)

- TABLE 58 EUROPE: MARKET, BY APPLICATION, 2022–2028 (USD MILLION)

- TABLE 59 EUROPE: MARKET, BY VERTICAL, 2022–2028 (USD MILLION)

- TABLE 60 EUROPE: MARKET, BY COUNTRY, 2022–2028 (USD MILLION)

- TABLE 61 UK: VISION TRANSFORMERS MARKET, BY OFFERING, 2022–2028 (USD MILLION)

- TABLE 62 UK: MARKET, BY APPLICATION, 2022–2028 (USD MILLION)

- TABLE 63 GERMANY: MARKET, BY OFFERING, 2022–2028 (USD MILLION)

- TABLE 64 GERMANY: MARKET, BY APPLICATION, 2022–2028 (USD MILLION)

- TABLE 65 FRANCE: MARKET, BY OFFERING, 2022–2028 (USD MILLION)

- TABLE 66 FRANCE: MARKET, BY APPLICATION, 2022–2028 (USD MILLION)

- TABLE 67 ITALY: MARKET, BY OFFERING, 2022–2028 (USD MILLION)

- TABLE 68 ITALY: MARKET, BY APPLICATION, 2022–2028 (USD MILLION)

- TABLE 69 REST OF EUROPE: MARKET, BY OFFERING, 2022–2028 (USD MILLION)

- TABLE 70 REST OF EUROPE: MARKET, BY APPLICATION, 2022–2028 (USD MILLION)

- TABLE 71 ASIA PACIFIC: VISION TRANSFORMERS MARKET, BY OFFERING, 2022–2028 (USD MILLION)

- TABLE 72 ASIA PACIFIC: MARKET, BY SOLUTION, 2022–2028 (USD MILLION)

- TABLE 73 ASIA PACIFIC: MARKET, BY PROFESSIONAL SERVICE, 2022–2028 (USD MILLION)

- TABLE 74 ASIA PACIFIC: MARKET, BY APPLICATION, 2022–2028 (USD MILLION)

- TABLE 75 ASIA PACIFIC: MARKET, BY VERTICAL, 2022–2028 (USD MILLION)

- TABLE 76 ASIA PACIFIC: MARKET, BY COUNTRY, 2022–2028 (USD MILLION)

- TABLE 77 CHINA: MARKET, BY OFFERING, 2022–2028 (USD MILLION)

- TABLE 78 CHINA: MARKET, BY APPLICATION, 2022–2028 (USD MILLION)

- TABLE 79 JAPAN: MARKET, BY OFFERING, 2022–2028 (USD MILLION)

- TABLE 80 JAPAN: MARKET, BY APPLICATION, 2022–2028 (USD MILLION)

- TABLE 81 INDIA: VISION TRANSFORMERS MARKET, BY OFFERING, 2022–2028 (USD MILLION)

- TABLE 82 INDIA: MARKET, BY APPLICATION, 2022–2028 (USD MILLION)

- TABLE 83 REST OF ASIA PACIFIC: MARKET, BY OFFERING, 2022–2028 (USD MILLION)

- TABLE 84 REST OF ASIA PACIFIC: MARKET, BY APPLICATION, 2022–2028 (USD MILLION)

- TABLE 85 ROW: MARKET, BY OFFERING, 2022–2028 (USD MILLION)

- TABLE 86 ROW: MARKET, BY SOLUTION, 2022–2028 (USD MILLION)

- TABLE 87 ROW: MARKET, BY PROFESSIONAL SERVICE, 2022–2028 (USD MILLION)

- TABLE 88 ROW: MARKET, BY APPLICATION, 2022–2028 (USD MILLION)

- TABLE 89 ROW: VISION TRANSFORMERS MARKET, BY VERTICAL, 2022–2028 (USD MILLION)

- TABLE 90 OVERVIEW OF STRATEGIES ADOPTED BY KEY VISION TRANSFORMER VENDORS

- TABLE 91 MARKET: INTENSITY OF COMPETITIVE RIVALRY

- TABLE 92 BRAND COMPARISON/VENDOR PRODUCT LANDSCAPE

- TABLE 93 COMPANY FOOTPRINT, BY REGION

- TABLE 94 COMPANY FOOTPRINT, BY OFFERING

- TABLE 95 OVERALL COMPANY FOOTPRINT

- TABLE 96 MARKET: PRODUCT LAUNCHES & ENHANCEMENTS, 2022–2023

- TABLE 97 VISION TRANSFORMERS MARKET: DEALS, 2022–2023

- TABLE 98 GOOGLE: BUSINESS OVERVIEW

- TABLE 99 GOOGLE: PRODUCTS/SOLUTIONS/SERVICES OFFERED

- TABLE 100 GOOGLE: PRODUCT LAUNCHES AND ENHANCEMENTS

- TABLE 101 OPENAI: COMPANY OVERVIEW

- TABLE 102 OPENAI: PRODUCTS/SOLUTIONS/SERVICES OFFERED

- TABLE 103 OPENAI: PRODUCT LAUNCHES AND ENHANCEMENTS

- TABLE 104 OPENAI: DEALS

- TABLE 105 META: COMPANY OVERVIEW

- TABLE 106 META: PRODUCTS/SOLUTIONS/SERVICES OFFERED

- TABLE 107 META: PRODUCT LAUNCHES AND ENHANCEMENTS

- TABLE 108 AWS: COMPANY OVERVIEW

- TABLE 109 AWS: PRODUCTS/SOLUTIONS/SERVICES OFFERED

- TABLE 110 AWS: PRODUCT LAUNCHES AND ENHANCEMENTS

- TABLE 111 AWS: DEALS

- TABLE 112 NVIDIA CORPORATION: BUSINESS OVERVIEW

- TABLE 113 NVIDIA CORPORATION: PRODUCT/SOLUTION/SERVICE OFFERINGS

- TABLE 114 NVIDIA CORPORATION: PRODUCT LAUNCHES AND ENHANCEMENTS

- TABLE 115 NVIDIA CORPORATION: DEALS

- TABLE 116 LEEWAYHERTZ: BUSINESS OVERVIEW

- TABLE 117 LEEWAYHERTZ: PRODUCTS/SERVICES OFFERED

- TABLE 118 SYNOPSYS: BUSINESS OVERVIEW

- TABLE 119 SYNOPSYS: PRODUCTS/SERVICES OFFERED

- TABLE 120 SYNOPSYS: PRODUCT LAUNCHES & ENHANCEMENTS

- TABLE 121 HUGGING FACE: BUSINESS OVERVIEW

- TABLE 122 HUGGING FACE: PRODUCTS/SERVICES OFFERED

- TABLE 123 HUGGING FACE: PRODUCT LAUNCHES AND ENHANCEMENTS

- TABLE 124 HUGGING FACE: DEALS

- TABLE 125 MICROSOFT: BUSINESS OVERVIEW

- TABLE 126 MICROSOFT: PRODUCTS/SOLUTIONS/SERVICES OFFERED

- TABLE 127 MICROSOFT: PRODUCT LAUNCHES & ENHANCEMENTS

- TABLE 128 MICROSOFT: DEALS

- TABLE 129 QUALCOMM: BUSINESS OVERVIEW

- TABLE 130 QUALCOMM: PRODUCTS/SOLUTIONS OFFERED

- TABLE 131 QUALCOMM: PRODUCT LAUNCHES AND ENHANCEMENTS

- TABLE 132 QUALCOMM: DEALS

- TABLE 133 INTEL: BUSINESS OVERVIEW

- TABLE 134 INTEL: PRODUCTS/SOLUTIONS OFFERED

- TABLE 135 INTEL: PRODUCT LAUNCHES AND ENHANCEMENTS

- TABLE 136 INTEL: DEALS

- TABLE 137 GENERATIVE AI MARKET, BY OFFERING, 2019–2022 (USD MILLION)

- TABLE 138 GENERATIVE AI MARKET, BY OFFERING, 2023–2030 (USD MILLION)

- TABLE 139 GENERATIVE AI MARKET, BY SERVICE, 2019–2022 (USD MILLION)

- TABLE 140 GENERATIVE AI MARKET, BY SERVICE, 2023–2030 (USD MILLION)

- TABLE 141 GENERATIVE AI MARKET, BY DATA MODALITY, 2019–2022 (USD MILLION)

- TABLE 142 GENERATIVE AI MARKET, BY DATA MODALITY, 2023–2030 (USD MILLION)

- TABLE 143 GENERATIVE AI MARKET, BY REGION, 2019–2022 (USD MILLION)

- TABLE 144 GENERATIVE AI MARKET, BY REGION, 2023–2030 (USD MILLION)

- TABLE 145 AI IN COMPUTER VISION MARKET, BY COMPONENT, 2019–2022 (USD MILLION)

- TABLE 146 AI IN COMPUTER VISION MARKET, BY COMPONENT, 2023–2028 (USD MILLION)

- TABLE 147 AI IN COMPUTER VISION MARKET, BY HARDWARE TYPE, 2019–2022 (USD MILLION)

- TABLE 148 AI IN COMPUTER VISION MARKET, BY HARDWARE TYPE, 2023–2028 (USD MILLION)

- TABLE 149 AI IN COMPUTER VISION MARKET, BY SOFTWARE TYPE, 2019–2022 (USD MILLION)

- TABLE 150 AI IN COMPUTER VISION MARKET, BY SOFTWARE TYPE, 2023–2028 (USD MILLION)

- TABLE 151 AI IN COMPUTER VISION MARKET, BY FUNCTION, 2019–2022 (USD MILLION)

- TABLE 152 AI IN COMPUTER VISION MARKET, BY FUNCTION, 2023–2028 (USD MILLION)

- TABLE 153 AI IN COMPUTER VISION MARKET, BY APPLICATION, 2019–2022 (USD MILLION)

- TABLE 154 AI IN COMPUTER VISION MARKET, BY APPLICATION, 2023–2028 (USD MILLION)

- TABLE 155 AI IN COMPUTER VISION MARKET, BY END-USE INDUSTRY, 2019–2022 (USD MILLION)

- TABLE 156 AI IN COMPUTER VISION MARKET, BY END-USE INDUSTRY, 2023–2028 (USD MILLION)

- TABLE 157 AI IN COMPUTER VISION MARKET, BY REGION, 2019–2022 (USD MILLION)

- TABLE 158 AI IN COMPUTER VISION MARKET, BY REGION, 2023–2028 (USD MILLION)

- FIGURE 1 VISION TRANSFORMERS MARKET: RESEARCH DESIGN

- FIGURE 2 MARKET: TOP-DOWN AND BOTTOM-UP APPROACHES

- FIGURE 3 MARKET SIZE ESTIMATION METHODOLOGY: TOP-DOWN APPROACH

- FIGURE 4 MARKET SIZE ESTIMATION METHODOLOGY: BOTTOM-UP APPROACH

- FIGURE 5 MARKET: RESEARCH FLOW

- FIGURE 6 MARKET SIZE ESTIMATION METHODOLOGY (SUPPLY SIDE): ILLUSTRATION OF VENDOR REVENUE ESTIMATION

- FIGURE 7 MARKET SIZE ESTIMATION METHODOLOGY: SUPPLY-SIDE ANALYSIS

- FIGURE 8 BOTTOM-UP APPROACH FROM SUPPLY SIDE: COLLECTIVE REVENUE OF VENDORS

- FIGURE 9 MARKET: DEMAND-SIDE APPROACH

- FIGURE 10 VISION TRANSFORMERS MARKET: MARKET BREAKUP AND DATA TRIANGULATION

- FIGURE 11 GLOBAL MARKET TO WITNESS SIGNIFICANT GROWTH

- FIGURE 12 NORTH AMERICA TO ACCOUNT FOR LARGEST SHARE IN 2023

- FIGURE 13 FASTEST-GROWING SEGMENTS OF MARKET

- FIGURE 14 PROLIFERATION OF IMAGE AND VIDEO DATA ON INTERNET AND GROWTH OF DATA COLLECTION TECHNOLOGIES TO BOOST MARKET

- FIGURE 15 VISION TRANSFORMER SOLUTIONS TO ACCOUNT FOR LARGER MARKET SHARE IN 2023 AND 2028

- FIGURE 16 OBJECT DETECTION APPLICATION TO ACCOUNT FOR LARGEST SHARE DURING FORECAST PERIOD

- FIGURE 17 MEDIA & ENTERTAINMENT VERTICAL TO ACCOUNT FOR LARGEST SHARE IN 2023

- FIGURE 18 ASIA PACIFIC TO EMERGE AS LUCRATIVE MARKET FOR INVESTMENTS IN NEXT FIVE YEARS

- FIGURE 19 VISION TRANSFORMERS MARKET: DRIVERS, RESTRAINTS, OPPORTUNITIES, AND CHALLENGES

- FIGURE 20 SUPPLY CHAIN MAP

- FIGURE 21 ECOSYSTEM MAP

- FIGURE 22 GENERATIVE AI: STARTUP FUNDING

- FIGURE 23 NUMBER OF PATENTS PUBLISHED, 2012–2022

- FIGURE 24 TOP FIVE PATENT OWNERS (GLOBAL)

- FIGURE 25 PORTER’S FIVE FORCES ANALYSIS

- FIGURE 26 IMPORT DATA, BY COUNTRY, 2018–2022 (USD MILLION)

- FIGURE 27 EXPORT DATA, BY COUNTRY, 2018–2022 (USD MILLION)

- FIGURE 28 TRENDS/DISRUPTIONS IMPACTING BUYERS

- FIGURE 29 INFLUENCE OF STAKEHOLDERS ON BUYING PROCESS FOR KEY VERTICALS

- FIGURE 30 KEY BUYING CRITERIA FOR KEY VERTICALS

- FIGURE 31 VISION TRANSFORMERS MARKET: BUSINESS MODELS

- FIGURE 32 PROFESSIONAL SERVICES SEGMENT TO GROW AT HIGHER CAGR DURING FORECAST PERIOD

- FIGURE 33 SOFTWARE SEGMENT TO LEAD MARKET BY 2028

- FIGURE 34 CONSULTING SEGMENT TO GROW AT HIGHEST CAGR DURING FORECAST PERIOD

- FIGURE 35 IMAGE CAPTIONING SEGMENT TO GROW AT HIGHEST CAGR DURING FORECAST PERIOD

- FIGURE 36 MEDIA & ENTERTAINMENT SEGMENT TO ACCOUNT FOR LARGEST MARKET IN 2023

- FIGURE 37 NORTH AMERICA TO ACCOUNT FOR LARGEST MARKET SHARE DURING FORECAST PERIOD

- FIGURE 38 NORTH AMERICA: MARKET SNAPSHOT

- FIGURE 39 ASIA PACIFIC: MARKET SNAPSHOT

- FIGURE 40 HISTORICAL FIVE-YEAR SEGMENTAL REVENUE ANALYSIS OF KEY VISION TRANSFORMER PROVIDERS

- FIGURE 41 MARKET SHARE ANALYSIS, 2022

- FIGURE 42 VISION TRANSFORMERS MARKET: GLOBAL SNAPSHOT OF KEY MARKET PARTICIPANTS

- FIGURE 43 COMPANY EVALUATION MATRIX: CRITERIA WEIGHTAGE

- FIGURE 44 COMPANY EVALUATION MATRIX

- FIGURE 45 VALUATION AND FINANCIAL METRICS OF VISION TRANSFORMER VENDORS

- FIGURE 46 GOOGLE: COMPANY SNAPSHOT

- FIGURE 47 META: COMPANY SNAPSHOT

- FIGURE 48 AWS: COMPANY SNAPSHOT

- FIGURE 49 NVIDIA CORPORATION: COMPANY SNAPSHOT

- FIGURE 50 SYNOPSYS: COMPANY SNAPSHOT

- FIGURE 51 MICROSOFT: COMPANY SNAPSHOT

- FIGURE 52 QUALCOMM: COMPANY SNAPSHOT

- FIGURE 53 INTEL: COMPANY SNAPSHOT

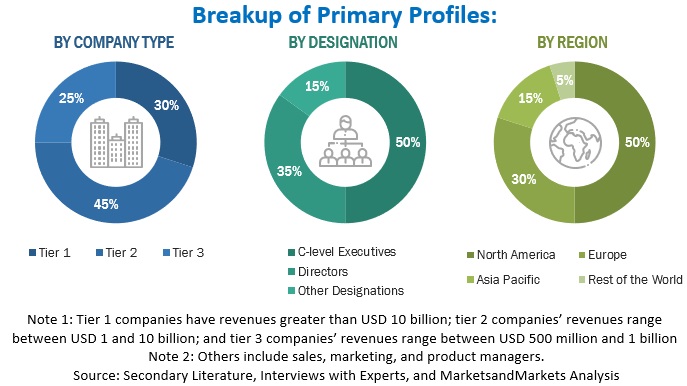

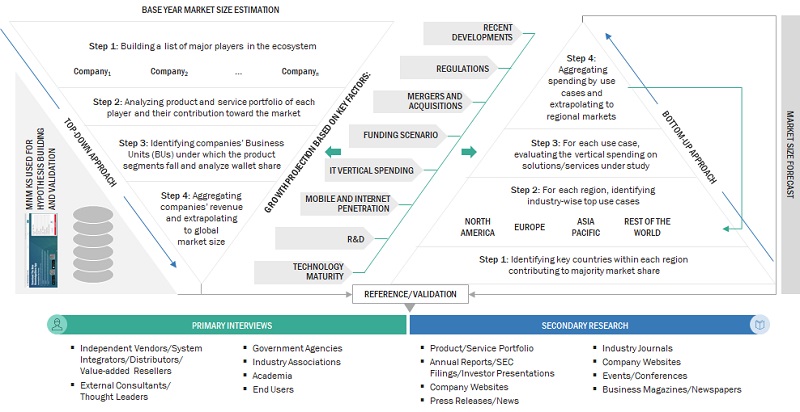

The study involved several key activities to estimate the size of the vision transformers market. We conducted exhaustive secondary research to collect information from reports on the current, adjacent, and parent markets. The next step was to validate these assumptions, findings, and sizing with subject matter experts via primary research. We used the bottom-up approach to estimate the total market size. After that, we employed market breakup and data triangulation procedures to estimate and forecast the size of the segments and subsegments of the vision transformers market.

Secondary Research

We determined the vision transformers market size based on secondary data available from paid and unpaid information sources. We also analyzed the product portfolios of major companies and rated the companies based on their performance and quality.

In the secondary research process, we referred to various secondary sources to identify and collect information for this study. These sources included press releases, annual reports, investor presentations, white papers, certified publications, articles from recognized associations, and government publications.

We used secondary research mainly to obtain critical information on the industry's value chain and supply chain. This information helped us identify the key players by solution and service; classify and segment the market according to the offerings of the major players; track industry trends related to solutions, services, verticals, and regions; and follow key developments from both market- and technology-oriented perspectives.

Primary Research

In the primary research process, we interviewed various sources from the supply and demand sides to obtain qualitative and quantitative information for this report. The primary sources from the supply side included industry experts such as Chief Experience Officers (CXOs); Vice Presidents (VPs); directors from business development, marketing, and product development/innovation teams; related key executives from vision transformers vendors, industry associations, and independent consultants; and key opinion leaders.

We conducted primary interviews to gather insights such as market statistics, the latest trends disrupting the market, new use cases implemented, data on revenue collected from products and services, market breakups, market size estimations, market forecasts, and data triangulation. Primary research also helped us understand various technology trends, offerings, end users, and regions. We interviewed demand-side stakeholders, such as Chief Information Officers (CIOs), Chief Technology Officers (CTOs), Chief Security Officers (CSOs), and digital initiatives project teams, to understand the buyer's perspective on suppliers, products, and service providers, and their current use of these offerings, all of which would affect the overall vision transformers market.

Market Size Estimation

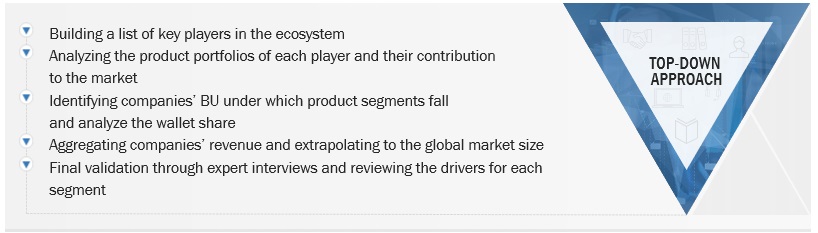

We used top-down and bottom-up approaches to estimate the size of the vision transformers market and its subsegments. We identified the vendors in the market through secondary research and determined their segment shares in regions and countries through extensive market research. This procedure included studying the annual reports of major market players and conducting extensive interviews with industry leaders.
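The two approaches can be illustrated with a minimal sketch. The report does not publish its formulas, so the functions below are only one plausible reading: the bottom-up estimate sums tracked vendor revenues and scales them by an assumed coverage ratio, and the top-down estimate applies an assumed share to a parent market. All names and figures (`vendor_a`, `coverage`, `parent_market`, `share`) are hypothetical placeholders, not data from the study.

```python
def bottom_up(vendor_revenues, coverage):
    """Sum tracked vendor revenues and scale up for untracked vendors.

    coverage is the assumed fraction of the market the tracked vendors represent.
    """
    if not 0 < coverage <= 1:
        raise ValueError("coverage must be in (0, 1]")
    return sum(vendor_revenues.values()) / coverage


def top_down(parent_market, share):
    """Apply an assumed share to the size of a parent market."""
    if not 0 <= share <= 1:
        raise ValueError("share must be in [0, 1]")
    return parent_market * share


# Hypothetical placeholder inputs in USD million (not taken from the report).
vendor_revenues = {"vendor_a": 60.0, "vendor_b": 45.0, "vendor_c": 15.0}

bottom_up_estimate = bottom_up(vendor_revenues, coverage=0.75)
top_down_estimate = top_down(parent_market=3000.0, share=0.05)

# Relative gap between the two estimates; a large gap signals that the
# assumptions need to be revisited with primary research.
relative_gap = abs(bottom_up_estimate - top_down_estimate) / top_down_estimate

print(f"bottom-up: {bottom_up_estimate:.1f}")
print(f"top-down:  {top_down_estimate:.1f}")
print(f"gap:       {relative_gap:.1%}")
```

Running both estimates side by side makes the dependence on the assumed coverage and share explicit, which is where validation through interviews would matter most.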

Top-Down and Bottom-Up Approach of Vision Transformers Market

Top-Down Approach of Vision Transformers Market

Data Triangulation

In the market estimation process, we split the market into segments and subsegments after arriving at the total market size. We followed data triangulation and market breakdown procedures to determine the size of each market segment and subsegment. The data was triangulated by studying factors and trends from both the demand and supply sides in the media & entertainment, retail & e-commerce, healthcare & life sciences, automotive, government & defense, and other verticals.
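A minimal sketch of triangulation followed by a segment breakdown is given below. The report does not state how independent estimates are reconciled, so averaging them when they agree within a tolerance is an assumption made here for illustration. The vertical shares and the two input estimates are hypothetical placeholders, not figures from the study.

```python
def triangulate(estimates, tolerance=0.10):
    """Reconcile independent estimates of the same market size.

    Assumption: estimates are averaged if their spread, relative to the mean,
    stays within the tolerance; otherwise they are rejected for review.
    """
    mean = sum(estimates) / len(estimates)
    spread = (max(estimates) - min(estimates)) / mean
    if spread > tolerance:
        raise ValueError(f"estimates diverge by {spread:.1%}; revisit assumptions")
    return mean


def break_down(total, shares):
    """Split a total market size into segments using fractional shares."""
    if abs(sum(shares.values()) - 1.0) > 1e-9:
        raise ValueError("segment shares must sum to 1")
    return {segment: total * share for segment, share in shares.items()}


# Hypothetical supply-side and demand-side estimates in USD million.
total = triangulate([160.0, 150.0])

# Hypothetical vertical shares (placeholders only).
vertical_shares = {
    "media_entertainment": 0.30,
    "retail_ecommerce": 0.20,
    "healthcare_life_sciences": 0.15,
    "automotive": 0.15,
    "government_defense": 0.10,
    "other_verticals": 0.10,
}

segments = break_down(total, vertical_shares)
for name, size in segments.items():
    print(f"{name}: {size:.2f}")
```

Checking that the shares sum to one keeps the segment sizes consistent with the triangulated total, which is the property a market breakdown needs to preserve.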

Market Definition

Vision transformers, often abbreviated as ViTs, are deep learning models used for computer vision tasks such as image classification and object detection. They extend the transformer architecture, which was initially developed for natural language processing tasks and has since proven highly effective in other domains. Vision transformers have gained popularity due to their ability to achieve state-of-the-art performance on a range of computer vision tasks and their capacity to handle large-scale datasets.
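To make the link to the transformer architecture concrete: in the commonly described ViT design, an image is cut into fixed-size patches, and each flattened patch is treated as a token, much like a word in a sentence. The sketch below shows only that patch-splitting step in plain Python. The function name `split_into_patches` and the tiny 4x4 single-channel image are illustrative assumptions; real models also apply a learned projection and position embeddings, which are omitted here.

```python
def split_into_patches(image, patch_size):
    """Split a 2D image (list of rows) into flattened square patches.

    Patches are returned in row-major order, one flat list per patch.
    """
    height, width = len(image), len(image[0])
    if height % patch_size or width % patch_size:
        raise ValueError("image dimensions must be divisible by patch_size")
    patches = []
    for top in range(0, height, patch_size):
        for left in range(0, width, patch_size):
            patch = [
                image[row][col]
                for row in range(top, top + patch_size)
                for col in range(left, left + patch_size)
            ]
            patches.append(patch)
    return patches


# A toy 4x4 "image" whose pixel values are 0..15 (illustrative only).
image = [[row * 4 + col for col in range(4)] for row in range(4)]
patches = split_into_patches(image, patch_size=2)

print(len(patches))   # number of tokens the transformer would receive
print(patches[0])     # top-left patch, flattened
```

The sequence of flattened patches is what the transformer's attention layers operate on, which is why the same architecture transfers from text to images.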

Key Stakeholders

The vision transformers market consists of the following stakeholders:

- IT service providers

- Vision transformer solution vendors

- Vision transformer service vendors

- Managed service providers

- Support and maintenance service providers

- System integrators (SIs)/Migration service providers

- Value-added resellers (VARs) and distributors

- Independent software vendors (ISVs)

- Third-party providers

- Technology providers

Report Objectives

- To define, describe, and forecast the vision transformers market based on offering, application, vertical, and region

- To provide detailed information about the major factors (drivers, opportunities, restraints, and challenges) influencing the growth of the market

- To analyze the opportunities in the market for stakeholders by identifying the high-growth segments of the market

- To forecast the size of the market segments with respect to the main regions: North America, Europe, Asia Pacific, and Rest of the World

- To analyze the subsegments of the market with respect to individual growth trends, prospects, and contributions to the overall market

- To profile the key players of the market and comprehensively analyze their market size and core competencies

- To track and analyze the competitive developments, such as product enhancements and product launches, acquisitions, and partnerships and collaborations, in the vision transformers market globally

Available Customizations

With the given market data, MarketsandMarkets offers customizations tailored to a company's specific needs. The following customization options are available for the report:

Product Analysis

- The product matrix provides a detailed comparison of the product portfolio of each company.

Geographic Analysis

- Further breakup of the Asia Pacific market into countries contributing 75% to the regional market size

- Further breakup of the North American market into countries contributing 75% to the regional market size

- Further breakup of the European market into countries contributing 75% to the regional market size

Company Information

- Detailed analysis and profiling of additional market players (up to 5)
