US AI Inference Market

US AI Inference Market by Compute (GPU, CPU, FPGA), Memory (DDR, HBM), Network (NICs/Network Adapters, Interconnects), Deployment (On-premises, Cloud, Edge), Application (Generative AI, Machine Learning, NLP, Computer Vision) - Forecast to 2030

OVERVIEW

Source: Secondary Research, Interviews with Experts, MarketsandMarkets Analysis

The US AI inference market is projected to grow from USD 32.32 billion in 2025 to USD 77.61 billion by 2030, at a CAGR of 19.1% during the forecast period. The market is expanding rapidly, driven by advances in generative AI (GenAI) and large language models (LLMs). Demand for AI inference is expected to rise as enterprises prioritize real-time GenAI deployment and hyperscalers expand infrastructure to support compute-heavy, data-driven decision-making worldwide.
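The headline figures can be checked with the standard compound-growth formula, CAGR = (end value / start value)^(1 / years) - 1. The sketch below applies it to the 2025 and 2030 market sizes quoted above; the helper names `cagr` and `project` are illustrative, not part of the report's methodology.

```python
def cagr(start_value: float, end_value: float, years: int) -> float:
    """Compound annual growth rate as a fraction (0.191 means 19.1%)."""
    if start_value <= 0 or end_value <= 0 or years <= 0:
        raise ValueError("values and years must be positive")
    return (end_value / start_value) ** (1 / years) - 1


def project(start_value: float, rate: float, years: int) -> list[float]:
    """Values for year 0..years, compounding start_value at a constant annual rate."""
    return [start_value * (1 + rate) ** t for t in range(years + 1)]


if __name__ == "__main__":
    size_2025 = 32.32  # USD billion, from the overview
    size_2030 = 77.61  # USD billion, from the overview
    rate = cagr(size_2025, size_2030, 2030 - 2025)
    print(f"Implied CAGR 2025-2030: {rate:.1%}")
    for year, value in zip(range(2025, 2031), project(size_2025, rate, 5)):
        print(year, f"USD {value:.2f} billion")
```

With these inputs the implied rate prints as 19.1%, matching the stated CAGR, and compounding USD 32.32 billion at that rate for five years returns USD 77.61 billion.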

KEY TAKEAWAYS

- By Compute: The CPU segment is projected to register the highest CAGR, 31.4%, from 2025 to 2030, reflecting its critical role in general-purpose processing and its increasing deployment in edge and cloud-based inference systems.

- By Memory: HBM (high-bandwidth memory) held the largest market share (71.0%) in 2024.

- By Network: The NICs/network adapters segment is expected to grow at a CAGR of 42.0% between 2025 and 2030.

- By Application: The generative AI segment is expected to grow at the highest CAGR during the forecast period.

- Competitive Landscape - Star Players: NVIDIA Corporation, Intel Corporation, and AMD were identified as star players in the US AI inference market, given their strong market share, extensive product portfolios, and long-standing commitment to innovation in AI-specific hardware.

- Competitive Landscape - Startups: Graphcore and Cerebras have distinguished themselves among startups and SMEs by securing strong footholds in specialized niche areas, underscoring their potential as emerging market leaders in AI inference technologies.

The US AI inference market is experiencing robust growth, driven by advances in AI hardware, increasing adoption across multiple sectors, rapid expansion of edge AI applications, and substantial research and development investments by leading technology companies.

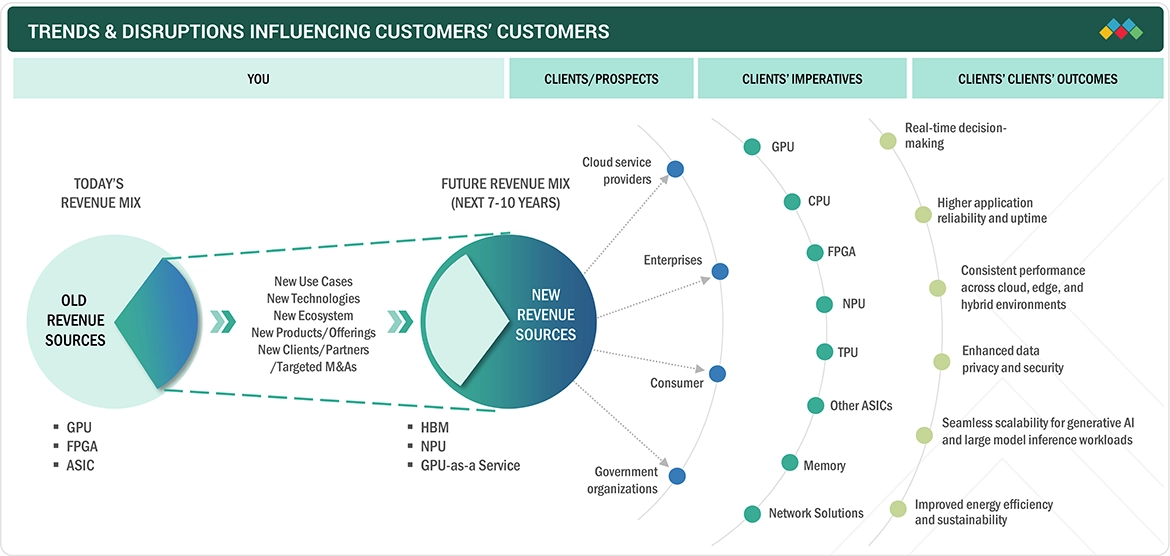

TRENDS & DISRUPTIONS IMPACTING CUSTOMERS' CUSTOMERS

The evolving AI inference market is reshaping end-user outcomes by enabling faster, more efficient, and scalable deployment of AI workloads across cloud, edge, and hybrid environments. As enterprises adopt advanced chips such as GPUs (graphics processing units), NPUs (neural processing units), and ASICs (application-specific integrated circuits), the focus is shifting toward delivering real-time insights, improved personalization, and reliable application performance. These advancements are further supported by energy-efficient processing and secure data handling, allowing organizations to reduce operational costs while meeting regulatory requirements. Additionally, the ability to scale generative AI workloads and accelerate deployment cycles is enabling businesses to bring AI-driven products to market faster, ultimately enhancing customer experience and driving measurable business value.


MARKET DYNAMICS

Drivers

- Growing demand for real-time data processing capabilities across edge devices

- Growth of advanced cloud platforms offering specialized AI inference services

Restraints

- Computational workload and high power consumption

- Shortage of skilled workforce

Opportunities

- Growth of AI-enabled healthcare and diagnostics

- Advancements in natural language processing for improved customer experience

Challenges

- Data privacy concerns

- Supply chain disruptions

Source: Secondary Research, Interviews with Experts, MarketsandMarkets Analysis

Driver: Growing demand for real-time data processing capabilities across edge devices

The increasing adoption of edge computing in the US is driving the demand for real-time AI inference capabilities, particularly in applications such as autonomous systems, smart manufacturing, and healthcare monitoring. Enterprises are prioritizing low-latency processing to enable faster decision-making, reduce cloud dependency, and improve operational efficiency, accelerating the growth of AI inference solutions at the edge.

Restraint: Computational workload and high power consumption

AI inference workloads, especially for complex models, require substantial computational resources, leading to high power consumption and increased operational costs. In the US, this creates challenges for enterprises aiming to scale AI deployments efficiently, particularly in energy-constrained environments, limiting widespread adoption despite growing demand.

Opportunity: Growth of AI-enabled healthcare and diagnostics

The rapid expansion of AI-driven healthcare applications in the US presents significant opportunities for the AI inference market. From medical imaging and diagnostics to remote patient monitoring, AI inference enables faster and more accurate clinical decisions, improving patient outcomes while supporting the growing demand for advanced, data-driven healthcare solutions.

Challenge: Data privacy concerns

Data privacy and security concerns remain a critical challenge in the US AI inference market, especially with stringent regulations surrounding sensitive data such as healthcare and financial information. Organizations must ensure compliance with evolving data protection laws while maintaining model performance, which increases complexity and may slow down AI adoption.

US AI INFERENCE MARKET: COMMERCIAL USE CASES ACROSS INDUSTRIES

| COMPANY | USE CASE DESCRIPTION | BENEFITS |
|---|---|---|
| Intel Corporation, Siemens Healthineers | AI-powered radiation therapy optimization | Achieved a 35x speedup in AI inference time compared with 3rd Gen Intel Xeon Scalable processors; 200 milliseconds to contour a typical abdominal scan with nine structures; freed-up CPU resources; efficiency; low energy consumption |
| Advanced Micro Devices, Inc. | AI-accelerated dark matter search using AMD FPGAs | Achieved 100 ns AI inference latency with improved trigger identification for dark matter detection; algorithm development accelerated from months to a day |
| NVIDIA Corporation, EleutherAI | Serving inference for LLMs with NVIDIA Triton Inference Server | Decreased latency by up to 40% for EleutherAI's models; high-performance, cost-effective solution that scales efficiently with demand; competitiveness in a rapidly changing AI landscape |
| Finch Computing, Amazon Web Services | Reducing inference costs for language translation using AWS Inferentia | Cut inference costs by over 80%; added support for three more languages; accelerated time to market for new models; attracted new customers while keeping throughput and response times stable |

Logos and trademarks shown above are the property of their respective owners. Their use here is for informational and illustrative purposes only.

MARKET ECOSYSTEM

The US AI inference ecosystem includes designers, capital equipment providers, manufacturers, and end users. Each of these stakeholders works to advance AI inference by sharing knowledge, resources, and expertise to foster innovation in this field. Manufacturers such as NVIDIA Corporation (US), Advanced Micro Devices, Inc. (US), and Intel Corporation (US) are central to the AI inference market. They are responsible for developing AI inference hardware for various applications.


MARKET SEGMENTS


US AI Inference Market, by Application

Generative AI applications are projected to register the highest CAGR in the US AI inference market due to rapid adoption across industries such as healthcare, media, finance, and customer service. The rising use of large language models, image generation, and code generation tools is driving the demand for high-performance inference infrastructure. Additionally, increasing enterprise investments, growing need for real-time content generation, and continuous advancements in AI models are accelerating deployment, making generative AI a key driver in the market.

US AI Inference Market, by Compute

GPUs lead the US AI inference market due to their superior parallel processing capabilities, which enable faster execution of complex AI models compared to traditional processors. They are widely adopted across data centers, cloud platforms, and enterprise applications for handling large-scale inference workloads efficiently. Strong ecosystem support, continuous innovation, and compatibility with leading AI frameworks further drive their dominance, while the increasing demand for generative AI and real-time analytics reinforces GPUs as the preferred choice for high-performance inference.

US AI Inference Market, by Memory

High bandwidth memory (HBM) dominates the US AI inference market due to its ability to deliver extremely high data throughput and low latency, critical for real-time AI workloads. It significantly improves performance-per-watt in GPUs and accelerators, making it ideal for large-scale inference deployments in data centers and edge AI applications.

US AI Inference Market, by Deployment

Cloud deployment holds the largest share in the US AI inference market due to its scalability, flexibility, and cost efficiency. It enables enterprises to access high-performance computing resources on demand, supports rapid model deployment, and reduces infrastructure costs. These attributes make it ideal for handling dynamic AI workloads and large-scale inference operations.

US AI Inference Market, by End User

Enterprises are projected to grow at the highest CAGR in the US AI inference market during the forecast period due to the increasing adoption of AI-driven applications across business operations, including customer analytics, automation, and decision-making. Rising investments in digital transformation, coupled with the need for real-time insights and competitive differentiation, are accelerating enterprise-level deployment of AI inference solutions.

US AI INFERENCE MARKET: COMPANY EVALUATION MATRIX

NVIDIA Corporation, recognized as a leading player in the US AI inference market, offers a comprehensive portfolio that includes GPUs designed specifically for AI workloads, software platforms such as NVIDIA AI Enterprise, and inference optimization tools. The company holds a dominant share of the AI hardware market and collaborates extensively with cloud service providers, automotive manufacturers, and enterprises across all major industries. Cerebras, by contrast, is seen as an emerging leader: it holds a significant market share but has a smaller product footprint, which indicates strong growth potential as it expands its AI inference offerings.


KEY MARKET PLAYERS

- NVIDIA Corporation (US)

- Intel Corporation (US)

- AMD (US)

- Apple Inc. (US)

- Google (US)

- Amazon Web Services, Inc. (US)

- Microsoft (US)

- Meta (US)

- Graphcore (UK)

- Cerebras (US)

MARKET SCOPE

| REPORT METRIC | DETAILS |
|---|---|
| Market Size in 2024 (Value) | USD 23.23 Billion |
| Market Forecast in 2030 (Value) | USD 77.61 Billion |
| Growth Rate | CAGR of 19.1% from 2025 to 2030 |
| Years Considered | 2021-2030 |
| Base Year | 2024 |
| Forecast Period | 2025-2030 |
| Units Considered | Value (USD Million/Billion), Volume (Thousand Units) |
| Report Coverage | Revenue Forecast, Company Ranking, Competitive Landscape, Growth Factors, and Trends |
| Segments Covered | Compute, Memory, Network, Deployment, Application, and End User |

WHAT IS IN IT FOR YOU: US AI INFERENCE MARKET REPORT CONTENT GUIDE

DELIVERED CUSTOMIZATIONS

We have successfully delivered the following deep-dive customizations:

| CLIENT REQUEST | CUSTOMIZATION DELIVERED | VALUE ADDS |
|---|---|---|
| Cloud Infrastructure Provider | Competitive analysis of inference accelerator architectures (GPUs, TPUs, ASICs, FPGAs) with performance benchmarking, cost-per-inference metrics, and deployment scalability assessments | |
| Edge AI Chipset Manufacturer | Regional market sizing for edge inference solutions across automotive, industrial IoT, smart devices, and retail sectors with device deployment forecasts and power-performance requirements | |
| Enterprise AI Platform Vendor | Deep-dive into model optimization techniques (quantization, pruning, distillation), inference runtime frameworks, and deployment toolchains across cloud and edge environments | |
| Telecommunications Operator | Country-specific analysis of network edge inference deployment models, latency requirements by use case, regulatory considerations, and competitive positioning strategies | |
| AI Software Startup | Comprehensive supplier ecosystem mapping for inference hardware, software frameworks, model optimization tools, and integration partnerships with technology maturity assessment | |

RECENT DEVELOPMENTS

- October 2024: Advanced Micro Devices, Inc. introduced the 5th Gen AMD EPYC processors for AI, cloud, and enterprise applications. They deliver maximum GPU acceleration, improved per-server performance, and enhanced AI inference capabilities. AMD EPYC 9005 processors offer dense, high-performance solutions for cloud workloads.

- October 2024: Intel Corporation and Inflection AI teamed up to accelerate AI adoption for businesses and developers by launching Inflection for Enterprise, an enterprise-grade AI platform. Powered by Intel Gaudi and Intel Tiber AI Cloud, the system provides customizable, scalable AI features, allowing companies to deploy AI co-workers trained on their specific data and policies.

- March 2024: NVIDIA Corporation introduced the NVIDIA Blackwell platform to help organizations build and run real-time generative AI. The platform features six innovative technologies for accelerated computing and supports AI training and real-time LLM inference for models of up to 10 trillion parameters.


Methodology

The research process for this technical, market-oriented, and commercial study of the US AI inference market included the systematic gathering, recording, and analysis of data about companies operating in the market. It involved the extensive use of secondary sources, directories, and databases (Factiva, Oanda, and OneSource) to identify and collect relevant information. In-depth interviews were conducted with various primary respondents, including experts from core and related industries and preferred manufacturers, to obtain and verify critical qualitative and quantitative information as well as to assess the growth prospects of the market. Key players in the US AI inference market were identified through secondary research, and their market rankings were determined through primary and secondary research. This included studying annual reports of top players and interviewing key industry experts, such as CEOs, directors, and marketing executives.

Secondary Research

In the secondary research process, various secondary sources were used to identify and collect information for this study. These include annual reports, press releases, and investor presentations of companies; whitepapers; certified publications; and articles from recognized associations and government publishing sources. Research reports from a few consortiums and councils were also consulted to structure qualitative content. Secondary sources included corporate filings (such as annual reports, investor presentations, and financial statements); trade, business, and professional associations; white papers; journals and certified publications; articles by recognized authors; gold-standard and silver-standard websites; directories; and databases. Data was also collected from secondary sources such as the International Trade Centre (ITC) and the International Monetary Fund (IMF).

List of key secondary sources

| Source | Web Link |
|---|---|
| European Association for Artificial Intelligence | https://eurai.org/ |
| Association for Machine Learning and Application (AMLA) | https://www.icmla-conference.org/ |
| Association for the Advancement of Artificial Intelligence | https://aaai.org/ |
| Generative AI Association (GENAIA) | https://www.generativeaiassociation.org/ |
| International Monetary Fund | https://www.imf.org/ |
| Institute of Electrical and Electronics Engineers (IEEE) | https://ieeexplore.ieee.org/ |

Primary Research

Extensive primary research was conducted after the US AI inference market scenario had been understood and analyzed through secondary research. Several primary interviews were conducted with key opinion leaders from both the demand and supply sides across four major regions: North America, Europe, Asia Pacific, and RoW. Approximately 30% of the primary interviews were conducted with the demand side and 70% with the supply side. Primary data was collected through questionnaires, emails, and telephone interviews. Various departments within organizations, such as sales, operations, and administration, were contacted to provide a holistic viewpoint in the report.


Market Size Estimation

In the complete market engineering process, top-down and bottom-up approaches and several data triangulation methods have been used to perform the market size estimation and forecasting for the overall market segments and subsegments listed in this report. Extensive qualitative and quantitative analyses have been performed on the complete market engineering process to list the key information/insights throughout the report. The following table explains the process flow of the market size estimation.

The key players in the market were identified through secondary research, and their rankings in the respective regions were determined through primary and secondary research. This entire procedure involved the study of the annual and financial reports of top players and interviews with industry experts such as chief executive officers, vice presidents, directors, and marketing executives for key quantitative and qualitative insights. All percentage shares, splits, and breakdowns were determined using secondary sources and verified through primary sources. All parameters that affect the markets covered in this research study were accounted for, viewed in extensive detail, verified through primary research, and analyzed to obtain the final quantitative and qualitative data. This data was consolidated, supplemented with detailed inputs and analysis from MarketsandMarkets, and presented in this report.

US AI inference market: Bottom-Up Approach

- Initially, the companies offering AI inference solutions were identified, and their products were mapped by compute, memory, network, deployment, application, and end user.

- After the different types of AI inference offerings from various manufacturers were understood, the market was categorized into segments based on data gathered through primary and secondary sources.

- To derive the US AI inference market, global shipments of AI servers by top players within the report's scope were tracked.

- A suitable penetration rate was assigned to compute, memory, and network offerings to derive AI inference shipments.

- We derived the US AI inference market for each offering using the average selling price (ASP) at which a particular company offers its devices. The ASP of each offering was identified from secondary sources and validated through primary interviews.

- For the CAGR, a market trend analysis was carried out based on the industry penetration rate and the demand for and supply of AI inference offerings across end users.

- The US AI inference market was also tracked through the data sanity method: the revenues of key providers were analyzed through annual reports and press releases and summed to derive the overall market.

- For each company, a percentage of its overall revenue (or, in a few cases, its segmental revenue) was assigned to derive its AI inference revenue. This percentage was assigned based on the company's product portfolio and range of AI inference offerings.

- The estimates at every level were verified and cross-checked through discussions with key opinion leaders, including CXOs, directors, and operations managers, and finally with domain experts at MarketsandMarkets.

- Various paid and unpaid sources of information, such as annual reports, press releases, white papers, and databases, have been studied.
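The two supply-side calculations in the list above (shipments × penetration rate × ASP, and the revenue-share "data sanity" check) can be sketched as follows. This is a minimal illustration under stated assumptions, not the report's model: the `Offering` type, the function names, and every input value are hypothetical; treating the penetration rate as the fraction of servers carrying an offering is an assumption; and the `us_share` factor is an assumed step, since the text does not say how global server shipments are narrowed to the US.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class Offering:
    name: str                # e.g. "GPU" or "NIC"
    penetration_rate: float  # assumed: fraction of tracked AI servers that include this offering
    asp_usd: float           # average selling price per unit in USD (hypothetical)


def shipment_based_estimate(global_servers: float, us_share: float,
                            offerings: list[Offering]) -> dict[str, float]:
    """Revenue per offering = US AI server shipments x penetration rate x ASP."""
    us_servers = global_servers * us_share  # hypothetical step: narrow global shipments to the US
    return {o.name: us_servers * o.penetration_rate * o.asp_usd for o in offerings}


def revenue_based_estimate(revenues_usd: dict[str, float],
                           inference_share: dict[str, float]) -> float:
    """Data-sanity cross-check: sum of each vendor's revenue x its assigned inference share."""
    return sum(revenue * inference_share[vendor] for vendor, revenue in revenues_usd.items())


if __name__ == "__main__":
    # Placeholder inputs only; none of these values come from the report.
    offerings = [Offering("GPU", 1.0, 30_000.0), Offering("NIC", 0.5, 1_500.0)]
    by_offering = shipment_based_estimate(100_000, 0.5, offerings)
    supply_side = revenue_based_estimate({"Vendor A": 40e9, "Vendor B": 10e9},
                                         {"Vendor A": 0.25, "Vendor B": 0.10})
    print("shipment-based:", by_offering, "total:", sum(by_offering.values()))
    print("revenue-based:", supply_side)
```

Keeping the two estimates as separate functions mirrors the text, where the revenue-based figure serves as an independent check on the shipment-based one rather than an input to it.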

US AI inference market: Top-Down Approach

- The global AI inference market size was estimated using the data sanity method applied to major companies.

- The US AI inference market showed an upward trend during the study period, as it is in the early stage of its product cycle, with major players beginning to expand into various application areas.

- The types of AI inference offerings, their features and properties, the geographic presence of players, and the key applications served by all players in the US AI inference market were studied to estimate the percentage split of the segments.

- Different types of AI inference offerings, such as compute, memory, and network, and their penetration among end users were also studied.

- Based on secondary research, the market split for AI inference by compute, memory, network, deployment, application, and end user was estimated.

- The demand generated by companies operating in different end-user segments was analyzed.

- Multiple discussions were held with key opinion leaders at major companies developing AI inference offerings and related components to validate the market split by compute, memory, network, deployment, application, and end user.

- The regional splits were estimated using secondary sources based on factors such as the number of players in a specific country and region and the adoption and use cases of each implementation type with respect to applications in the region.
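The top-down steps above amount to scaling a market total and then distributing it across segments by percentage split. A minimal sketch, assuming the splits are expressed as fractions that must sum to 1; the function name, the `us_share` scaling factor, and all numbers in the usage example are hypothetical placeholders rather than report estimates.

```python
def top_down_split(global_market_usd: float, us_share: float,
                   segment_shares: dict[str, float]) -> dict[str, float]:
    """Scale a global total to the US, then allocate it across segments by share."""
    total_share = sum(segment_shares.values())
    # Splits must cover the whole market; allow for floating-point rounding.
    if abs(total_share - 1.0) > 1e-9:
        raise ValueError(f"segment shares must sum to 1.0, got {total_share}")
    us_market = global_market_usd * us_share  # hypothetical regional scaling step
    return {segment: us_market * share for segment, share in segment_shares.items()}


if __name__ == "__main__":
    # Placeholder inputs for illustration only.
    compute_split = {"GPU": 0.7, "CPU": 0.2, "FPGA": 0.1}
    by_segment = top_down_split(100e9, 0.4, compute_split)
    for segment, value in by_segment.items():
        print(f"{segment}: USD {value / 1e9:.1f} billion")
```

Rejecting splits that do not sum to 1 is a design choice for the sketch: it catches a segment that was dropped or double-counted before the numbers propagate further.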

Data Triangulation

After arriving at the overall size of the US AI inference market through the process explained above, the overall market has been split into several segments. Data triangulation procedures have been employed to complete the overall market engineering process and arrive at the exact statistics for all the segments, wherever applicable. The data has been triangulated by studying various factors and trends from both the demand and supply sides. The market has also been validated using both top-down and bottom-up approaches.
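Triangulation, as described above, checks that independently derived estimates agree before they are reconciled. The sketch below is one simple way to express that check. The 5% tolerance and the use of a plain mean to reconcile agreeing estimates are assumptions for illustration, not the report's documented procedure, and the inputs are placeholders.

```python
from statistics import mean


def triangulate(estimates: dict[str, float], tolerance: float = 0.05) -> tuple[float, bool]:
    """Return (reconciled estimate, agree) for independently derived market sizes.

    `agree` is True when the relative spread between the largest and smallest
    estimate is within `tolerance`; the reconciled value is the plain mean.
    """
    values = list(estimates.values())
    if len(values) < 2 or min(values) <= 0:
        raise ValueError("need at least two positive estimates")
    spread = (max(values) - min(values)) / max(values)
    return mean(values), spread <= tolerance


if __name__ == "__main__":
    # Placeholder estimates in USD billion (not report figures).
    estimate, agree = triangulate({"bottom_up": 31.9, "top_down": 32.7})
    print(f"reconciled: {estimate:.2f}, within tolerance: {agree}")
```

When the approaches disagree beyond the tolerance, averaging would hide the gap; in the spirit of the process above, the inputs would instead be revisited with primary sources.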

Market Definition

AI inference is the process of using a trained artificial intelligence (AI) model to make predictions, classify data, or extract insights from new, unseen input data. It involves applying a model to tasks such as image recognition, language processing, or real-time analytics. Optimized for efficiency and speed, AI inference often runs on specialized hardware, enabling applications ranging from autonomous systems to personalized recommendations. It encompasses a combination of high-performance computing resources (e.g., GPUs, CPUs, and FPGAs), memory solutions (e.g., DDR and HBM), and networking components (e.g., network adapters and interconnects) optimized for handling AI workloads. It is utilized in generative AI, machine learning, natural language processing (NLP), and computer vision applications.
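To make the definition concrete, inference is the forward pass of an already-trained model on new data, with no weight updates. The toy logistic-regression classifier below illustrates that step. Its weights and bias are invented values standing in for the output of a training run, and the function names are hypothetical; production systems run far larger models on the hardware listed above.

```python
import math

# Frozen parameters standing in for the output of a training run (invented values).
WEIGHTS = [0.8, -1.2, 0.5]
BIAS = -0.1


def predict_proba(features: list[float]) -> float:
    """Inference: apply the frozen model to one new input; no weights are updated."""
    if len(features) != len(WEIGHTS):
        raise ValueError(f"expected {len(WEIGHTS)} features, got {len(features)}")
    z = sum(w * x for w, x in zip(WEIGHTS, features)) + BIAS
    return 1.0 / (1.0 + math.exp(-z))  # logistic sigmoid maps the score to (0, 1)


def classify(features: list[float], threshold: float = 0.5) -> int:
    """Turn the predicted probability into a class label (1 or 0)."""
    return int(predict_proba(features) >= threshold)


if __name__ == "__main__":
    new_input = [2.0, 0.0, 1.0]  # unseen data arriving at serving time
    print(predict_proba(new_input), classify(new_input))
```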

Key Stakeholders

- Government and financial institutions and investment communities

- Analysts and strategic business planners

- Semiconductor product designers and fabricators

- Application providers

- AI solution providers

- AI platform providers

- AI system providers

- Manufacturers and AI technology users

- Business providers

- Component and device suppliers and distributors

- Professional service/solution providers

- Research organizations

- Technology standard organizations, forums, alliances, and associations

- Technology investors

- Investors (private equity firms, venture capitalists, and others)

Report Objectives

- To define, describe, segment, and forecast the size of the US AI inference market, in terms of value, based on compute, memory, network, deployment, application, end user, and region

- To forecast the size of the market segments for four major regions: North America, Europe, Asia Pacific, and RoW

- To define, describe, segment, and forecast the size of the US AI inference market, in terms of volume, based on compute

- To give detailed information regarding drivers, restraints, opportunities, and challenges influencing the growth of the market

- To provide a value chain analysis, ecosystem analysis, case study analysis, patent analysis, trade analysis, technology analysis, pricing analysis, key conferences and events, key stakeholders and buying criteria, Porter's five forces analysis, investment and funding scenario, and regulations pertaining to the market

- To provide a detailed overview of the value chain analysis of the AI inference ecosystem

- To strategically analyze micromarkets with regard to individual growth trends, prospects, and contributions to the total market

- To analyze opportunities for stakeholders by identifying high-growth segments of the market

- To strategically profile the key players, comprehensively analyze their market positions in terms of ranking and core competencies, and provide a competitive market landscape

- To analyze strategic approaches such as product launches, acquisitions, agreements, and partnerships in the US AI inference market

Available Customizations

With the given market data, MarketsandMarkets offers customizations according to the company’s specific needs. The following customization options are available for the report:

Country-wise Information:

- Detailed analysis and profiling of additional market players (up to 7)
